Chapter 7 is called “Mechanisms in Programs and Nature.”

Read the whole chapter here.

One way of seeing how the evolution of simple programs might have something to say about natural systems is to compare images of growth, patterns, etc. in natural systems with certain steps in the evolution of particular cellular automata. Doing this, we see some remarkable similarities, which seem to:

“…reflect a deep correspondence between simple programs and systems in nature.” [p. 298]

Just as simple programs with different rules can behave similarly (think of the Wolfram classes), systems in nature that seem very different can behave in similar ways. The connection between the two comes down to components: whether a system is built of molecules or of black and white cells, the same universal features should be present in its behavior. In other words, the mechanisms are the same.

Apparent randomness is common in nature. This section endeavors to explain how this randomness could emerge, by thinking of a natural process as the evolution of a simple program.

The result is three basic mechanisms for randomness: randomness introduced by interaction with the environment; randomness introduced only by the initial conditions (no interaction with the environment and no intrinsic generation); and intrinsic randomness produced by the rules themselves (no interaction, and largely independent of initial conditions).

The first two mechanisms depend on something outside the system for the apparent randomness in their evolution. The third does not. Wolfram posits that this third mechanism is responsible for a large fraction of (if not essentially all) the randomness present in the natural world.

Example: a boat bobbing up and down on a rough ocean. However, the true origin of the ocean’s randomness may itself have a different mechanism.

Example: Brownian motion, as seen by placing a grain of pollen in a liquid and observing its motion. However, the origin of the pollen’s randomness (constant bombardment by molecules of the liquid) may itself have a different mechanism.

Example: A radio receiver producing noise, which in most cases is a highly amplified version of the microscopic processes inside the receiver.

However, it has been found that microscopic physical processes do not always produce the best possible randomness; in fact, the output of devices built to generate randomness at this level shows significant deviations from randomness. More generally, randomness drawn from interaction with the environment is imperfect because the previous state of a device can influence its next state, so the device is not in the same state each time it receives a piece of input. According to Wolfram, quantum mechanics implies that it can take an infinite amount of time for a system to “relax” to a normal state, so we should expect randomness generated in this manner to be intrinsically imperfect.

Most generally, the environment-interaction mechanism for generating randomness is superficial since the randomness is caused by some other random process, about which we know nothing except that it is “random.”

Example: roll a ball along a rough surface. The rough surface is unchanging, so one can treat it, together with the initial speed of the ball, as part of the initial conditions that determine the ball’s path.

Such systems rely on their final state being very sensitive to initial conditions. Other examples are coin tosses, wheels of fortune, roulette wheels, etc.

Some systems are so sensitive to initial conditions that no machine with fixed tolerances could ever be expected to yield repeatable results. This is the basis of chaos theory. Indeed, in recent years the mathematics of chaos theory has assumed that, in practice, random digit sequences in initial conditions are inevitable.

However, this mathematical idealization is simplistic. In the example of a kneading machine, two points that start out one atom apart would be roughly one meter apart after about thirty steps. Since atoms have finite size, one cannot make arbitrarily small changes in position, so the initial conditions cannot hold an unlimited supply of random digits.
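
The arithmetic behind the kneading example can be checked with a short sketch. The numbers here are assumptions rather than values from the chapter: an atomic spacing of about 1e-10 m, and a separation that exactly doubles on every step. The names `ATOMIC_SPACING_M`, `separation_after`, and `steps_to_reach` are made up for illustration.

```python
# Assumed numbers, not taken from the text: a typical atomic spacing of
# about 1e-10 m, and a separation that exactly doubles on every step.
ATOMIC_SPACING_M = 1e-10
GROWTH_PER_STEP = 2.0


def separation_after(steps, start=ATOMIC_SPACING_M, growth=GROWTH_PER_STEP):
    """Separation in meters after the given number of kneading steps."""
    return start * growth ** steps


def steps_to_reach(target, start=ATOMIC_SPACING_M, growth=GROWTH_PER_STEP):
    """Smallest number of steps after which the separation is at least target."""
    steps = 0
    separation = start
    while separation < target:
        separation *= growth
        steps += 1
    return steps


if __name__ == "__main__":
    print(f"separation after 30 steps: {separation_after(30):.3f} m")
    print(f"steps needed to reach 1 m: {steps_to_reach(1.0)}")
```

Under these assumptions the separation is about 0.107 m after 30 steps and passes one meter after 34, so the "thirty steps to a meter" figure holds to within an order of magnitude. The point is that the supply of unseen digits in the initial conditions is used up after only a few dozen steps.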

“And indeed in any system, the amount of time over which the details of initial conditions can ever be considered the dominant source of randomness will inevitably be limited by the level of separation that exists between the large-scale features that one observes and small-scale features that one cannot readily control.” [p. 313]

Example: Three-body problem

Example: The rule 30 CA:

The center column of rule 30 is considered random by most definitions, though some definitions of randomness exclude any sequence generated by a simple procedure. Wolfram argues, however, that if such a definition were to hold, most natural processes would be unable to produce randomness, when we know that they in fact do.
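
To make the rule 30 example concrete, here is a minimal sketch that evolves the automaton from a single black cell and reads off the center column. The function name `rule30_center_column` is made up for illustration; the update itself is the standard rule 30, where each new cell is the left neighbor XOR (the cell itself OR the right neighbor).

```python
def rule30_center_column(steps):
    """Return the center-cell values for the first `steps` rows of rule 30,
    starting from a single black cell (1) on a white (0) background."""
    # The pattern spreads by at most one cell per step on each side, so this
    # width keeps the fixed white boundary from ever affecting the center.
    width = 2 * steps + 1
    center = steps
    cells = [0] * width
    cells[center] = 1
    column = []
    for _ in range(steps):
        column.append(cells[center])
        padded = [0] + cells + [0]
        # Rule 30: new cell = left XOR (center OR right).
        cells = [padded[i - 1] ^ (padded[i] | padded[i + 1])
                 for i in range(1, width + 1)]
    return column


if __name__ == "__main__":
    print("".join(str(bit) for bit in rule30_center_column(64)))
```

Nothing random is fed in at any point: the rule and the single starting cell fully determine the sequence, yet the printed bits show no obvious pattern.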

Instead of defining randomness in that way, it makes more sense to call a sequence random when one cannot easily determine, from the sequence alone, the rules that generated it.

“…the fact that simple cellular automaton rules are sufficient to give rise to intrinsic randomness generation suggests that in reality it is rather easy for this mechanism to occur. And as a result, one can expect that the mechanism will be found often in nature.” [p. 321]

Wolfram postulates that whenever a large amount of randomness is generated in a short time, intrinsic randomness generation is the likely culprit. Also, intrinsic randomness generation is the most efficient way of getting randomness, as environmental randomness progressively slows the system with the addition of each new component.

One can detect intrinsic randomness by looking at its repeatability: if such randomness can be reproduced, it is likely intrinsic, because environmentally induced randomness would make a repeat run impossible, since the environment will change in each subsequent run.

Example: calculate the digits of pi. While they are seemingly random, you get the same answer if you start the calculation over, if you calculate it from atop a mountain, if you calculate it from the bottom of the ocean or from the atmosphere of Titan (good luck with that one!), and so forth.
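
The repeatability test can be tried directly on the digits of pi. The chapter summary does not say how to compute them; the sketch below uses a common Python rendering of Gibbons's unbounded spigot algorithm as a stand-in, and the helper name `first_digits` is made up for illustration.

```python
from itertools import islice


def pi_digits():
    """Yield the decimal digits of pi one at a time (3, 1, 4, 1, 5, ...).

    Uses only exact integer arithmetic, so no rounding can creep in.
    """
    q, r, t, k, n, l = 1, 0, 1, 1, 3, 3
    while True:
        if 4 * q + r - t < n * t:
            # The next digit is settled: emit it and rescale the state.
            yield n
            q, r, t, k, n, l = (10 * q, 10 * (r - n * t), t, k,
                                (10 * (3 * q + r)) // t - 10 * n, l)
        else:
            # Not settled yet: absorb one more term of the series.
            q, r, t, k, n, l = (q * k, (2 * q + r) * l, t * l, k + 1,
                                (q * (7 * k + 2) + r * l) // (t * l), l + 2)


def first_digits(count):
    """Return the first `count` digits of pi as a list of ints."""
    return list(islice(pi_digits(), count))


if __name__ == "__main__":
    run_one = first_digits(50)
    run_two = first_digits(50)
    print("".join(map(str, run_one)))
    print("identical on a second run:", run_one == run_two)
```

Two independent runs agree digit for digit, which is the signature of intrinsic randomness described above: nothing from the environment enters the calculation.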

In a system with a large number of discrete elements, taking the average may make the system look smooth and continuous. For example, air and water appear continuous only because they are composed of such a large number of small, discrete components. However, the “key ingredient” that makes discrete systems with large numbers of components appear continuous is often randomness.

Compare and contrast crystals (no randomness, appear structured and non-smooth), and non-crystalline solids, which appear smooth.

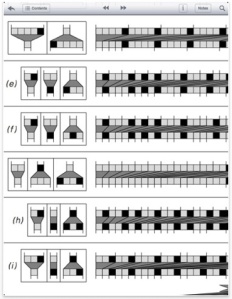

A random walk starts with a discrete particle and, at each step of the evolution, moves the particle left or right at random. Random walks can take place in as many dimensions as you like, with “left or right” replaced by directions along the various axes. So in one dimension the particle can move left or right (that is, positive or negative along the number line); in two dimensions it can move {positive, positive}, {positive, negative}, {negative, positive}, or {negative, negative}, where each pair gives the direction along each axis; and so on.

With enough particles, random walks start to look smooth.
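
A rough way to see this smoothing is to run many one-dimensional walkers and look at where they end up. The sketch below is illustrative only: the function names, the walker and step counts, and the use of Python's seeded pseudo-random generator as the source of left/right choices are all assumptions, not something specified in the chapter.

```python
import random
from collections import Counter


def final_positions(walkers, steps, seed=0):
    """Run independent 1D random walks and return each walker's end position."""
    rng = random.Random(seed)  # seeded so that a rerun gives the same walks
    positions = []
    for _ in range(walkers):
        x = 0
        for _ in range(steps):
            x += rng.choice((-1, 1))  # one step left or right
        positions.append(x)
    return positions


def histogram(positions, bin_width=4, scale=10):
    """Render a crude text histogram of the end positions."""
    counts = Counter(x // bin_width for x in positions)
    lines = []
    for b in range(min(counts), max(counts) + 1):
        lines.append(f"{b * bin_width:>4} " + "#" * (counts[b] // scale))
    return "\n".join(lines)


if __name__ == "__main__":
    ends = final_positions(walkers=2000, steps=100)
    print(histogram(ends))
```

Each individual walk is jagged, but the histogram of end positions has a smooth, bell-like outline centered near zero, with a variance close to the number of steps.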

Other systems that look smooth given enough components are aggregation models, which are built by starting with a single black cell on a grid and adding one new cell on each step. However, the randomness involved in these examples is inserted from the outside at each step of the evolution of the system.

In these examples, the intrinsic randomness generation in the systems is able to make them behave in seemingly continuous ways. [p. 333]

However, not every system that involves randomness will ultimately produce smooth patterns of growth. “As a rough guide,” continuous patterns of growth seem possible only when there’s enough time over the life of the evolution, and small-enough scale changes.

As in the last section, we realize that discrete systems can appear continuous. The vast majority of traditional mathematical models have been based on such continuity. But in nature one also sees discrete behavior, like skin/coat pigmentation. This would seem to suggest that continuous models can sometimes yield behavior that appears discrete. For example, water boiling into steam is a discrete transition.

One can investigate such discrete transitions with one-dimensional CAs, by making continuous changes in the initial density of black cells. What happens is that when the initial density of black cells is less than 50%, only white stripes survive. When it’s above 50%, the black stripes survive.

In contrast to the approach of traditional science, programs immediately provide explicit rules instead of just working with constraints that have to be satisfied.

The problem with satisfying constraints is that, in practice, working out which pattern of behavior satisfies a given constraint usually seems too difficult for it to be something that routinely happens in nature. [p. 342]

Programs come with rules that explicitly tell you how a system will be worked out. Constraints, however, don’t tell you the procedure for working out the system that satisfies them. A process based on picking out patterns randomly is incredibly unlikely to yield results even close to satisfying the constraints. Iterative procedures do better, but they usually yield only an approximate result, and they often ‘get stuck’ during the iterative process and do not yield the behavior we’re looking for.
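
Both failure modes show up even in a toy case. The sketch below uses a made-up one-dimensional constraint (on a ring of cells, no two neighbors may have the same color), which is far simpler than the two-dimensional constraints examined in the book; all function names are hypothetical. It counts how rare satisfying patterns are, then runs a simple iterative procedure that flips one cell at a time whenever that strictly reduces the number of violations.

```python
from itertools import product


def violations(cells):
    """Count neighboring pairs on the ring that have the same color."""
    n = len(cells)
    return sum(cells[i] == cells[(i + 1) % n] for i in range(n))


def count_satisfying(n):
    """Return (patterns with zero violations, total patterns) for n cells."""
    good = sum(1 for c in product((0, 1), repeat=n) if violations(c) == 0)
    return good, 2 ** n


def greedy_descent(cells):
    """Flip single cells while a flip strictly lowers the violation count."""
    cells = list(cells)
    improved = True
    while improved:
        improved = False
        current = violations(cells)
        for i in range(len(cells)):
            cells[i] ^= 1          # try flipping cell i
            if violations(cells) < current:
                improved = True    # keep the flip and rescan from the start
                break
            cells[i] ^= 1          # no gain: undo the flip
    return cells


if __name__ == "__main__":
    good, total = count_satisfying(12)
    print(f"{good} of {total} patterns satisfy the constraint")
    stuck = greedy_descent([0, 1, 0, 0, 1, 0, 1, 1, 0, 1, 0, 1])
    print("violations left after greedy descent:", violations(stuck))
```

For 12 cells only 2 of the 4096 patterns satisfy the constraint, so random picking almost never succeeds. The greedy procedure fixes easy cases, but from the starting pattern shown it stops with 2 violations left, because every single flip only moves a violation rather than removing it.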

“One can look at all sorts of other physical systems, but so far as I can tell the story is always more or less the same: whenever there is behavior of significant complexity its most plausible explanation tends to be some explicit process of evolution, not the implicit satisfaction of constraints.” [p. 351]

In the book we’ve found that some programs show highly complex behavior, and others rather simple behavior like uniformity, repetition, or nesting. And there doesn’t seem to be any direct correspondence between the complexities of the rules and the complexity of the resulting behavior.

Most of the time we can’t tell from the rules what kind of behavior a system will exhibit. But sometimes, like in the case of complete uniformity in the rules (all states going to one state), we can. Repetition is the next simplest form of behavior. This will tend to happen with cyclic rules, like {State1 -> State2 -> State1}. Also, sometimes the basic structure of a system will only allow a limited number of states. The next simplest form of behavior is nesting. This process can be described by every element branching into smaller and smaller elements. The rules for these systems are scale-invariant, so regardless of the physical size, the rules applied are the same. But also there is a discrete splitting/branching in which several distinct elements arise from an individual starting element. Nesting can also arise as larger elements are built up from smaller ones.

These mechanisms are in some sense genuinely different, yet all can be captured by simple programs.

We are pleased to announce the 10th annual Wolfram Science Summer School (formerly the NKS Summer School), and would like to invite you to apply to the three-week, tuition-free program, which runs June 25 through July 13 in Boston, Massachusetts. The Wolfram Science Summer School is hosted by Wolfram Research, makers of Mathematica and the computational knowledge engine Wolfram|Alpha, and Stephen Wolfram, world-renowned author of A New Kind of Science (NKS).
